Research: Optimising Gigabytes of Pepper&Carrot images

This is a technical post where I explain my choices and process for moving the high-resolution pages from lossless PNG at 300ppi to JPG at 300ppi compressed at 95% quality, for optimization and performance.

The problem

Hosting 20GB of high-resolution PNG files to offer print quality in all languages is a lot of work to maintain for a service that is rarely used. But delivering this 'pre-built' 300ppi version of the comic is something I want to keep, as I believe Pepper&Carrot is a rare (if not the only) webcomic to offer this type of service.

Each page (2481x3503px) rendered as lossless PNG weighs more than 10MB. An episode with all current languages represents around 300 pages. So each time a typo, a correction, or a change is made on a page, gigabytes of data have to be processed and uploaded again. That's why I keep perfecting, day after day, a set of bash scripts to do it, so that my computer can work alone at night. Today, with 12 episodes and 25 languages, I can still manage... well, not exactly: some mornings, the upload jobs started the night before are not finished. This affects my productivity, as my Internet connection is then slow for half a day. What would my situation be with twice as many episodes and translation files? Certainly a lot of maintenance, and it could happen sooner than I expect. Pepper&Carrot is growing at light-speed, and my days still have the same 24 hours. Too much maintenance is a real problem: I want to keep control and free as much time as possible to produce comics, drawings and artworks. That's why I invest time in improving my bash scripting skills: only automation and optimization can save me time.

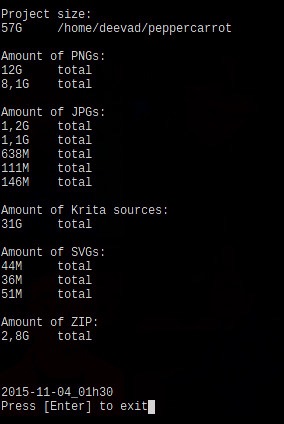

My bash script 'filewalker' reporting on file weight
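The 'filewalker' script itself is not shown in this post. As a rough idea of what such a file-weight report involves, here is a minimal sketch; the function name `report_weight` is hypothetical, and it assumes 1 MB = 1,000,000 bytes.

```shell
# Hypothetical helper, NOT the author's 'filewalker' script.
# Prints the number of files and their total size in MB
# for one extension under a directory (searched recursively).
report_weight() {
    dir="$1"   # directory to scan
    ext="$2"   # extension without the dot, e.g. png
    find "$dir" -type f -name "*.$ext" -exec wc -c {} + |
        awk -v ext="$ext" '
            # wc adds a "total" line when given several files: skip it
            $2 != "total" { count++; bytes += $1 }
            END { printf "%s: %d file(s), %.1f MB\n", ext, count, bytes / 1000000 }'
}
```

Calling `report_weight hi-res png` and then `report_weight hi-res jpg` on a hypothetical `hi-res` folder would give the two totals to compare.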

Solutions

One way to solve this problem was to look at how to reduce the file weight of the lossless high-resolution PNGs for print. 20GB can certainly be compressed. I ran many tests with cWebP, Pngcrush, OptiPNG, Pngquant, ImageMagick, GraphicsMagick, G'MIC and GD to find the best way to provide high-resolution files ready for print over the Internet. I also wanted to study this change in depth, to be sure I would not make a big switching effort for nothing.

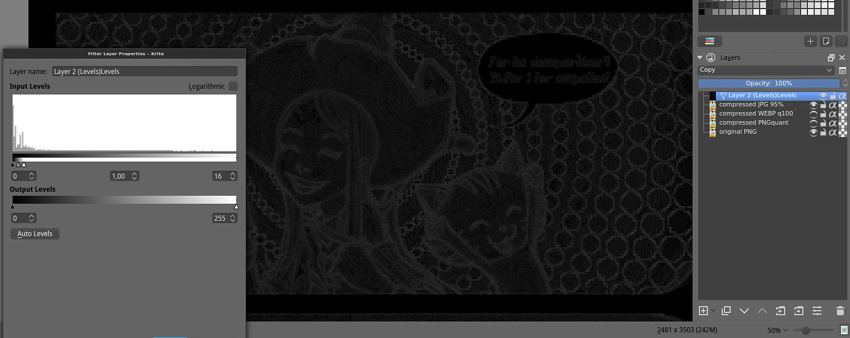

My requirements are simple: I don't mind if the file is slightly altered from the original artwork; the compression can be lossy, but it has to be subtle. Subtle to the point that I can't see the difference when zooming into both files: lossless PNG on one side, compressed version on the other. I used Krita and its tiled windows to zoom and compare. I also stacked the two versions each time as layers with the Difference blending mode. This blending mode compares the pixel colors: it returns pure black where nothing has changed, and gets brighter in proportion to the difference between the original pixels and those of the compressed version. I also added a Filter Layer with increased Levels on top of the layer stack. This filter amplifies the compression noise, which then became something I could assess and compare with my own eyes:

The rig in Krita: comparing in the layer stack with the Difference blending mode. The grey information reveals (thanks to the Levels filter) compression artifacts and changes to human eyes; the real result of the Difference blending mode is 90% more subtle.

Thanks to this methodology and these tools, it was easier to base my final choice on a real study. Here are the notes I took during the tests:
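The comparison described above was done by eye in Krita. For readers who prefer the command line, a similar amplified difference image can be sketched with ImageMagick. This is a swapped-in technique, not the rig used for this study: the function name `diff_map` is hypothetical, and the 10% white point is an arbitrary illustrative value.

```shell
# Sketch only: the study used Krita layers, not this command.
# Writes an amplified difference image between an original
# and its compressed copy.
diff_map() {
    original="$1"     # e.g. the lossless PNG
    compressed="$2"   # e.g. the JPG under test
    output="$3"       # where to write the difference image
    # 'difference' compositing: identical pixels come out pure black.
    # -level 0%,10% stretches the darkest tenth of the range to full
    # brightness, so faint compression noise becomes visible.
    convert "$original" "$compressed" -compose difference -composite \
        -level 0%,10% "$output"
}
```

For example, `diff_map page.png page.jpg diff.png` would write the amplified difference to `diff.png`. On ImageMagick 7, the same arguments can be passed to `magick` instead of `convert`.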

PNG optimisation: OptiPNG and Pngcrush took around 10 minutes per page, with full usage of all the threads of an 8-core 2,4GHz Core i7 machine. They turned a 10,5MB page into a 9,2MB page while keeping the same extension and perfect lossless quality. This type of compression costs too much in time and electricity, and the resulting file weight is still too high.

WebP: I studied the proposal to make almost-lossless files with WebP, using options such as -q 100 and -preset photo. I was happy to be able to compare WebP in Krita (yes, Krita can open them), but my Difference blending mode revealed a really high level of compression artifacts. With default settings, cwebp rendered the page to 0,5MB, but with a really 'potato' compression look. With the best settings (-q 100 and -preset photo), I obtained a 3MB file, but this file still had too many compression artifacts. Also, WebP is not supported by all browsers or image editors. I would adopt it if its compression artifacts were competitive with JPG and if the final weight were also an improvement. But for 'near-to-lossless' output, this promising technology is not suited.

JPG: I studied JPG with many libraries and obtained the best results in terms of speed, quality and compression with ImageMagick. JPG is compatible with every application and has been the king image format of the Internet for more than a decade. First, the 'blur' option is far too destructive. Progressive encoding has advantages for displaying the hi-resolution pages and almost no impact on file size, so I kept this option for the hi-resolution files only. I studied every quality setting from 92% to 100% and compared the compression noise with the method described above. The result: on hi-resolution pages, from 100% down to 95%, the compression noise is not noticeable. The artwork is not affected at all, nor are the colors. The noise doesn't build any blocks, and can't be told apart from the natural noise of the artwork. Rendering is really fast, and the file weight goes down to 26% of the original size. This is my solution.
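A quality sweep like the one described above, from 92% to 100%, could be scripted roughly as follows. This is a minimal sketch under assumptions: the function name `quality_sweep` and the `q<N>.jpg` output naming are hypothetical, and only the `-quality` option is varied.

```shell
# Hypothetical sweep: encode one page at each JPG quality from 92
# to 100 and print the resulting size in bytes, to pair with a
# visual check of each output file.
quality_sweep() {
    input="$1"     # lossless source page, e.g. page.png
    outdir="$2"    # existing directory that receives the test encodes
    q=92
    while [ "$q" -le 100 ]; do
        out="$outdir/q$q.jpg"
        convert "$input" -quality "$q" "$out"
        printf '%s%%\t%s bytes\n' "$q" "$(wc -c < "$out" | tr -d ' ')"
        q=$((q + 1))
    done
}
```

The printed sizes only cover the file-weight half of the decision; the threshold where artifacts become visible still has to be judged by eye.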

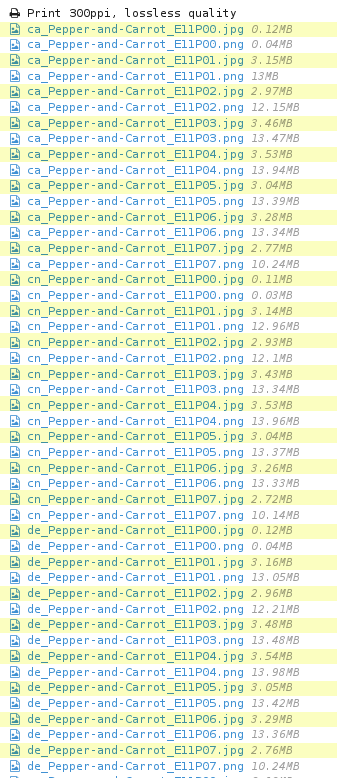

During the upload, the PNG and JPG files sat side by side. You can compare the file sizes on this screenshot.

Here is my table to summarise the tests:

| filetype | software/options | size | notes |
|---|---|---|---|
| PNG | imagemagick | 10,5MB | lossless ( original ) |
| PNG | pngcrush | 9,2MB | lossless, 10min per page: too long |
| WEBP | cwebp default | 505KB | potato-looking |
| WEBP | cwebp -q 100 | 2,9MB | too many artifacts |
| WEBP | cwebp -q 100 -preset photo | 3MB | too many artifacts |
| JPG | imagemagick 99% | 6,4MB | ok |
| JPG | imagemagick 98% | 4,7MB | ok |
| JPG | imagemagick 97% | 3,9MB | ok |
| JPG | imagemagick 96% | 3,4MB | ok |
| JPG | imagemagick 95% | 2,9MB | ok |
| JPG | imagemagick prog + -quality 95% | 2,8MB | ok ( Final choice ) |
| JPG | imagemagick 94% | 2,6MB | too many artifacts |
| JPG | imagemagick 93% | 2,4MB | too many artifacts |
| JPG | imagemagick 92% | 2,2MB | too many artifacts |
| JPG | imagemagick 85% 0.5 blur prog | 939KB | blurry, potato-looking |

Conclusion

`convert -strip -interlace Plane -quality 95% input.png output.jpg`

Here is the final, simple command line I now use to manage my 'very-very-near-to-lossless' high-resolution files. I think I can be happy with what I found, because I optimised everything to be 74% smaller: a huge impact on bandwidth for the HD button, on downloading, and on uploading.
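The 26% and 74% figures follow from the two sizes in the table. A small sketch of the arithmetic, with the sizes written in KB and the variable names chosen only for illustration:

```shell
# Sizes taken from the table above, in KB.
original_kb=10500   # lossless PNG, 10,5MB
final_kb=2800       # progressive JPG at quality 95%, 2,8MB

# Shell arithmetic is integer-only, so the ratio is truncated.
remaining=$((final_kb * 100 / original_kb))
saved=$((100 - remaining))

echo "JPG is ${remaining}% of the PNG size, so ${saved}% smaller"
# prints: JPG is 26% of the PNG size, so 74% smaller
```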

This study of course only concerns the case of my pages (2481x3503px), with all the requirements I had (browser and app compatibility, low file weight, no visible noise, slightly lossy is OK). I am not writing this blog post to feed a format war between PNG, WebP and JPG. I just regret that I had to do this study alone, and that nowhere on the Internet could I find an article with recommendations about near-lossless high-resolution pictures.

I'm switching the whole database of artworks right now. It took 2 hours to convert all the hi-res PNGs, and more than 40 hours of upload to put the JPGs online. I also had to modify the renderfarm and the website to adapt to the new files. Not a small job. But to share 20GB of data with only 5GB, it is totally worth it.
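The conversion pass itself is not shown in this post. A minimal sketch of how such a batch could look, reusing the command line from the conclusion, is given below; the function name `batch_to_jpg` is hypothetical, this is not the renderfarm script, and it assumes no file name contains a newline.

```shell
# Hypothetical batch pass, NOT the author's renderfarm script:
# write a JPG next to every PNG found under a directory.
batch_to_jpg() {
    dir="$1"   # root folder holding the hi-res PNG pages
    find "$dir" -type f -name '*.png' | while IFS= read -r png; do
        jpg="${png%.png}.jpg"   # same path, .jpg extension
        # Options taken from the command line in the conclusion.
        convert -strip -interlace Plane -quality 95% "$png" "$jpg"
    done
}
```

The source PNGs are left in place, so the result can be checked before anything is deleted or uploaded.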

For pure lossless quality, I still offer the Krita files with all layers in the Sources category of this website, and the translations are still SVGs. So the sources are intact and lossless. Only the final output is 'very-very-near-to-lossless', to ease online sharing of files and maintenance.